"Dynamic Resilience by Architecture": A Question for the Era of JC-STAR Requirements

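Solar power generation, storage batteries, EMS, and EV chargers are no longer standalone electrical equipment.

PCS units connect to output-control servers and monitoring clouds, EMS aggregates demand and generation data, storage batteries charge and discharge in response to market prices and DR commands, and EV chargers tie into billing, authentication, and load control through OCPP and APIs.

A distributed energy resource is power equipment and, at the same time, industrial IoT connected to an IP network.

Stronger cybersecurity measures are therefore unavoidable.

The further energy systems move toward IoT, cloud services, remote control, and DER/VPP operation, the more entry points attackers have.

In one case in Japan, remote monitoring devices at solar power plants were accessed without authorization and abused as a stepping stone for fraudulent money transfers.

Even though that incident did not directly disturb the grid, it made clear that communication devices attached to power equipment can become attack targets on the internet.

What deserves caution here, however, is the shortcut that "using certified products makes us safe."

Labeling schemes such as JC-STAR are important.

They make it easier for buyers to confirm a product's baseline security functions, and they push manufacturers toward accountability.

But the security of an energy system is not determined by product-level certification alone.

Actual security varies greatly with how devices are connected, how privileges are managed, how dependent the system is on the cloud, how updates are operated, how field maintenance is organized, how the system behaves during failures, and how well a compromise can be isolated.

This article welcomes the move toward JC-STAR requirements and grid interconnection technical requirements, and lays out why a static, certification-centered mindset is not sufficient on its own.

This is not an argument against certification.

Certification is necessary as an entry point.

However, as cloud-native design, OTA, the API economy, AI-generated code, and OSS dependencies erode the meaning of "the state at shipment," the question is how to keep a continuously changing power infrastructure safe.

We therefore consider distributed energy systems built around Dynamic Resilience, Resilience by Architecture, Local Autonomy, Loose Coupling, Offline-first, Fail-safe, and Graceful Degradation, as a design philosophy that solar power producers, EPCs, O&M providers, EMS operators, EV charging stakeholders, and policymakers should share.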

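What Is JC-STAR?

JC-STAR is the security requirements conformity assessment and labeling scheme operated by IPA.

Its formal name is the Labeling Scheme based on Japan Cyber-Security Technical Assessment Requirements. For IoT products, it confirms conformity with a defined set of security requirements and makes the result visible in a form that buyers and users can check.

The policy for establishing the scheme was presented in 2024, and applications for ★1, the baseline level, began in 2025.

JC-STAR covers IoT products that communicate over IP.

This can include not only devices connected directly to the internet but also devices that may reach external networks through a gateway or router.

In the context of solar power and storage batteries, the products under discussion include PCS units, communication modules, EMS, local controllers, communication gateways, and remote monitoring devices.

However, parts of the scheme's scope, levels, and applicability are still being worked out, so in practice it is necessary to check the latest published materials, the requirements of the general transmission and distribution operators, and each manufacturer's certification status.

JC-STAR has multiple levels.

★1 defines the minimum security requirements common to IoT products and is based on self-declaration of conformity.

★2 and above add requirements for each product category, and the higher levels presuppose third-party evaluation.

The important point is that ★1 is a "minimum entry point." It does not guarantee complete security for a particular power plant, factory, charging network, or VPP platform.

IPA itself explains that the conformity label does not guarantee complete or perfect security and does not cover unanticipated threats or individual operating environments.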

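The Reality of Grid Interconnection Requirements from 2027 Onward

Cybersecurity requirements for distributed energy resources are already part of the grid interconnection technical requirements.

These have called for minimizing the influence of external networks, applying anti-malware measures to devices that store or transfer data, and notifying the operator of the security manager and emergency contacts.

Discussion is now moving toward adding the use of JC-STAR conforming products to these requirements.

According to published materials and industry reports, from April 2027 onward the use of JC-STAR ★1 products for PCS units and similar devices is expected to become a requirement in grid interconnection procedures for distributed energy resources such as solar power and storage batteries.

Low-voltage, small-scale facilities under 50 kW are also being considered for inclusion, and some transitional measures may be provided.

However, the specific devices covered, the start date, the treatment of upgrades to existing facilities, and whether the scope extends to EMS and communication gateways should be checked carefully against the regulatory documents in force at the time of each project.

This move is reasonable.

Small-scale solar and residential equipment have not traditionally been held to cybersecurity operations as strict as those of large power plants.

But even small facilities add up to a large output when there are enough of them.

Once low-voltage PCS units, residential batteries, home EMS, and EV chargers are connected to clouds and aggregators and act simultaneously on the same command, small individual capacity is no longer a sufficient condition for safety.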

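Why Tighter Regulation Became Necessary

Behind the tightening of regulation is not a mere formal strengthening of controls but a structural change in the power system.

First, solar power and storage batteries are growing rapidly.

Second, they are becoming tied to remote monitoring, remote configuration, output control, supply-demand balancing, and market trading.

Third, aggregators, VPPs, DR, and EV charging control are spreading mechanisms that bundle dispersed devices and operate them together.

This is beneficial for the power system.

If distributed energy resources can be controlled properly, more renewable energy can be integrated, peaks can be reduced, and demand-side resources can be put to use.

At the same time, it gives attackers a path for operating dispersed devices all at once.

Stopping PCS output, unauthorized charging and discharging, simultaneous start of EV charging, tampering with output-control commands, falsifying monitoring data, and abusing cloud APIs can go beyond the problem of an individual facility and affect local grid operation and supply-demand control.

Nation-state threats and supply chain risk cannot be ignored either.

This is not a simple matter of emotionally excluding manufacturers from a particular country.

But as long as PCS units and EMS with communication functions remain tied to cloud services, OTA, maintenance accounts, and remote operation functions over long periods, accountability is required regarding the country of procurement, the development organization, maintenance sites, firmware signing, log disclosure, vulnerability response, and remote access privileges.

In Europe as well, discussion is advancing on treating solar inverters as critical infrastructure components comparable to 5G equipment. We have entered a period in which geopolitics and power control cannot be separated.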

Where Energy IoT Becomes Complex

Field risk cannot be understood by looking at a single PCS.

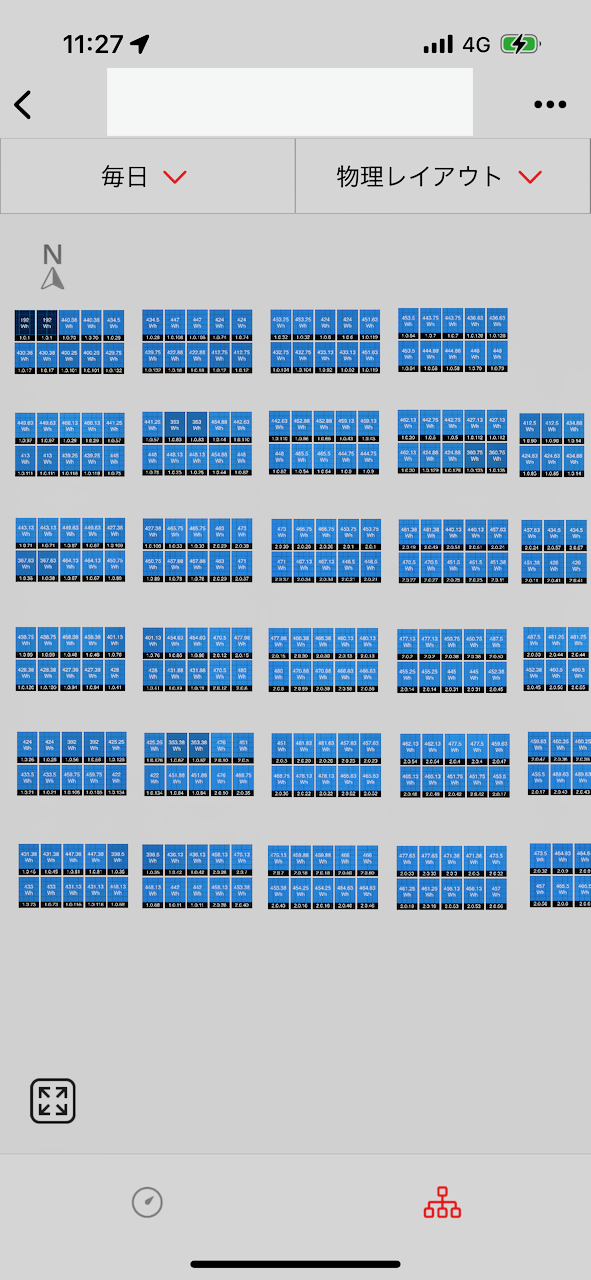

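At real power plants and business sites, PCS units, Smart Loggers, data loggers, communication gateways, LTE routers, VPN appliances, monitoring clouds, installers' maintenance PCs, manufacturers' remote maintenance accounts, EMS, BMS, the utility's output-control server, OCPP-capable EV chargers, billing systems, and the customer's LAN are all intertwined.

The vulnerabilities that arise here rarely show up in a product catalog.

The default password was never changed on site.

The LTE router's management interface is visible from outside.

A shared maintenance VPN account is reused across multiple sites.

The EMS API key goes unrotated for long periods.

Unnecessary ports are open for cloud integration.

OTA updates run automatically and change control logic regardless of the site's operating conditions.

All of these are operational problems that product functions at the time of certification cannot capture.

The same applies to EV chargers.

OCPP is an important protocol for interoperability, but as chargers, CSMS, authentication, billing, and load control become connected over a wide area, the attack surface grows.

Risks such as stopping many chargers at once, starting them all at once, misusing credentials, taking over a management interface, or falsifying demand data become more realistic as adoption spreads.

Solar power, storage batteries, EMS, and EV chargers therefore need to be treated not as separate pieces of equipment but as parts of the same energy IoT.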

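Why Static Certification Alone Is Not Enough

Certification is necessary.

Prohibiting default passwords, providing authentication, access control, update capability, and communication protection, disabling unnecessary interfaces, and publishing a vulnerability contact point are basics that any IoT product should satisfy.

Connecting products that fail to meet them to power equipment will become less and less acceptable.

But cybersecurity is not a static problem.

After shipment, a product is connected to a network, reconfigured, linked through APIs, affected by cloud-side updates, handed over between maintenance staff, and exposed as vulnerability information is published.

The configuration at the time of certification will not match the field configuration five years later.

Today, cloud-side feature additions, OTA changes to control logic, API integrations that come and go, vulnerabilities in OSS libraries, and changes in development processes that include AI-generated code all continue to shift a product's security after shipment.

Confirming that "the product conformed at shipment" is not enough to evaluate an entire system that keeps changing during operation.

Threats also evolve.

Attackers do not just read product specifications. They exploit actual installation practices, outsourced maintenance structures, end-of-support devices, published PoCs, leaked credentials, and design flaws in cloud APIs.

In other words, certification is a minimum line, not operational security itself.

The misconception that "certified means safe" actually creates new vulnerabilities.

An EPC checks only the certification label and skips network design.

A power producer leaves maintenance account management entirely to the manufacturer.

An O&M provider has no procedure for continuing operation during a communication loss or cloud outage.

An EMS operator tightly couples every facility on the assumption that the central cloud is always available.

In such situations, the system as a whole remains fragile even when certified products are used.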

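Do Not Forget That Power Is Physical Infrastructure

In IT system security, availability, confidentiality, and integrity are the usual topics.

They matter for power equipment too, of course.

But power is a physical system.

It involves real electrical behavior: continued operation, safe shutdown, protection coordination, frequency and voltage maintenance, islanded operation, restoration after a blackout, and supply to critical loads.

For that reason, "stopping everything" is not always safe.

At customer facilities, a sudden stop can affect production lines, refrigeration, and medical or communication equipment.

For storage batteries and EV chargers, a simultaneous stop or simultaneous return can itself create a demand swing.

From the grid's point of view, even small dispersed devices become a physical disturbance if they act simultaneously on the same cloud command or in the same failure mode.

Cybersecurity must be designed to cover not only login management on a screen but also how power equipment behaves under abnormal conditions.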

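From Security by Design to Resilience by Architecture

Security by Design does not simply mean adding encryption, authentication, logging, and update functions.

Those are important components, but they are not the whole design philosophy.

In an energy system, Security by Design means building, from the start, a structure that does not transition into a dangerous state when an attack or failure occurs, that confines the impact locally, and that can continue the minimum necessary operation.

This is where Dynamic Resilience and Resilience by Architecture become important.

The idea is not to assume "we will not be compromised" but to emphasize that "the whole does not collapse even if a part is compromised."

For energy infrastructure, combining detection of compromise, isolation, degraded operation, manual recovery, local autonomy, and continuity of operation is more realistic than perfect defense.

The world of power equipment has long had the concepts of fail-safe design and protection coordination.

Under abnormal conditions, the system does not fall to the dangerous side.

A partial failure does not spread into a total shutdown.

Protective devices act hierarchically and can disconnect locally.

Cybersecurity is the same.

Protection coordination for information systems needs to be layered on top of electrical protection coordination.

In other words, beyond choosing strong products, it is important to build into the architecture how the system breaks, how parts are cut off, how they are brought back, and how observation continues.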

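So What Do We Do? Design Principles That Can Be Implemented in the Field

If the argument ends at "certification is not enough," practitioners are left asking what to change in tomorrow's design.

Dynamic Resilience is not an abstract ideal.

It is a set of principles that EPCs, O&M providers, power producers, self-consumption facilities, EV charging operators, and EMS operators can check and reflect in their designs starting now.

The first principle is to reduce the attack surface before thinking about certification.

Do not expose anything externally that does not need to be exposed.

Turn off unnecessary APIs.

Close unnecessary ports.

Do not put LTE router management interfaces or PCS monitoring screens directly on the internet.

Do not reuse a shared maintenance ID across multiple sites.

Minimize inbound communication from outside, and where it is necessary, make VPN, source restriction, multi-factor authentication, separation of privileges, and audit logs the baseline.

These are the most cost-effective measures and should come before bringing in advanced security products.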
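The first principle can be turned into a simple review of every inbound path into a site. The following is a minimal sketch, assuming a hypothetical `InboundRule` record filled in by hand from the communication diagram; the field names and findings are illustrative and do not come from JC-STAR or any grid code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    """One inbound path into a site. All fields are illustrative assumptions."""
    name: str
    internet_facing: bool
    via_vpn: bool
    source_restricted: bool
    mfa: bool
    shared_account: bool


def audit(rule: InboundRule) -> list[str]:
    """Return findings for one inbound path; an empty list means no finding."""
    findings = []
    if rule.internet_facing and not rule.via_vpn:
        findings.append("exposed directly to the internet")
    if not rule.source_restricted:
        findings.append("no source restriction")
    if not rule.mfa:
        findings.append("no multi-factor authentication")
    if rule.shared_account:
        findings.append("shared maintenance account")
    return findings


rules = [
    InboundRule("lte-router-admin", True, False, False, False, True),
    InboundRule("maintenance-vpn", True, True, True, True, False),
]
for rule in rules:
    print(rule.name, audit(rule) or "ok")
```

A list like this is only as good as the inventory behind it, so it works best when it is generated from the same communication diagram that is reviewed at design time.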

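The second principle is to use the cloud as a convenient upper-layer function without making it a prerequisite for continued operation.

If the monitoring cloud stops, the PCS still operates according to its protection functions and the grid interconnection requirements.

If DNS fails, the local EMS still continues minimum control.

If the authentication platform stops, the person responsible on site can still perform safe operations locally.

If an API integration is cut, generation, storage, charging, and demand control move to a safe local mode.

The cloud is powerful for optimization and visibility, but it must not become the last safety valve of power equipment.

The third principle is to separate the control hierarchy.

Level 1 is local protection in the PCS, storage battery, and protective devices.

Here, electrical safety comes first: overvoltage, islanding, abnormal current, and equipment protection.

Level 2 is the site EMS.

It coordinates demand, generation, storage, EV charging, and load control within the scope of a business site or power plant.

Level 3 is wide-area monitoring, VPP, aggregation, and market integration.

Even if upper-level optimization breaks, lower-level safety does not.

If a wide-area command is abnormal, the site EMS validates it, and if the site EMS goes down, PCS local protection still acts.

This layer separation is the information-system counterpart of electrical protection coordination.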
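The layering can be sketched as two small functions, one per lower level. This is a minimal sketch under stated assumptions: `SiteLimits`, the function names, and the voltage and frequency thresholds are hypothetical illustrations, not values from any interconnection requirement, and real Level 1 protection lives in the PCS and protective relays rather than in application code.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteLimits:
    rated_kw: float        # inverter nameplate (assumed value in the example)
    max_export_kw: float   # export limit agreed for the site (assumed)


def site_ems_setpoint(level3_kw, local_schedule_kw, limits):
    """Level 2: validate a Level 3 command and clamp it to site limits.

    A missing or non-finite command falls back to the local schedule, so an
    upstream failure never leaves the site without a setpoint.
    """
    usable = level3_kw is not None and math.isfinite(level3_kw)
    chosen = level3_kw if usable else local_schedule_kw
    return max(0.0, min(chosen, limits.rated_kw, limits.max_export_kw))


def pcs_local_protection(setpoint_kw, voltage_pu, freq_hz, nominal_hz=50.0):
    """Level 1: stop regardless of upper layers if local measurements are abnormal.

    The 0.90-1.10 pu and +/-1.0 Hz windows are placeholders for illustration.
    """
    if not (0.90 <= voltage_pu <= 1.10) or abs(freq_hz - nominal_hz) > 1.0:
        return 0.0
    return setpoint_kw


limits = SiteLimits(rated_kw=50.0, max_export_kw=30.0)
wide_area_cmd = 500.0  # an implausible command from Level 3
setpoint = site_ems_setpoint(wide_area_cmd, local_schedule_kw=20.0, limits=limits)
print(pcs_local_protection(setpoint, voltage_pu=1.02, freq_hz=50.0))
```

The point of the structure is that each level only narrows what the level above asked for: Level 2 can clamp or replace a Level 3 command, and Level 1 can override both.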

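The fourth principle is Fail Operational and Graceful Degradation.

In IT systems, stopping on an anomaly is often the safe choice.

For power equipment, however, what matters is continuing to operate while degrading toward the safe side.

On cloud loss, move to fixed output or a local schedule.

On VPP loss, stop accepting wide-area commands and return to on-site control.

On API loss, stop external integration and operate in a predefined safe mode.

If billing authentication for EV charging goes down, provide a limited local mode authorized by an administrator, rather than opening charging to everyone or stopping it entirely.

If full shutdown is regarded as the only safe state, "stopping things" becomes an easy objective for attackers.

The same question arises for output control.

One design seen in practice is logic that drops output uniformly to zero when communication is lost and the output-control calendar or upstream commands cannot be obtained.

From the grid side, this is an easily understood fail-safe in the sense that uncontrollable generation is not injected.

Whether it is always optimal for the resilience of the power infrastructure as a whole, however, should be examined as a separate question.

If the same logic is implemented across a wide area, a communication failure, cloud outage, authentication platform failure, DNS failure, or calendar distribution failure can cause simultaneous zero output from distributed resources that were otherwise able to generate.

An intention to "fail to the safe side" can end up creating large-scale simultaneous shutdown, a worse supply-demand balance, confusion when output returns during recovery, and a correlated failure that attackers can target.

This is not a criticism of utilities or of the regulatory framework. It is a question of operational design under abnormal conditions: which failure modes to accept and which to avoid.

If we think about degraded operation that suits distributed resources, there is an alternative to declaring zero output the only safe response to a communication loss: degrade operation within a range that does not disturb the grid, based on locally observable voltage, frequency, reverse power flow, and load conditions.

For example, curtail active power when voltage rises.

Contribute to voltage maintenance through reactive power control where needed.

At facilities that require a reverse power flow limit, hold the limit using local measurement.

If there is a storage battery, divert the surplus to charging.

If there is self-consumption load, use the power within local demand.

If frequency or voltage is abnormal, shut down safely.

When communication is restored, return to verified calendar control or upstream control.

This kind of staged degradation is a concrete example of Fail Operational, Dynamic Resilience, and Resilience by Architecture.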
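The staged degradation described above can be sketched as a local active power limit plus a rule for where the surplus goes. This is a minimal sketch under stated assumptions: the linear curtailment between `v_start` and `v_stop`, the frequency band, and every numeric default are hypothetical placeholders, not settings from any grid code, and reactive power control is left out.

```python
def degraded_active_power_limit(voltage_pu, freq_hz, rated_kw, nominal_hz=50.0,
                                v_start=1.05, v_stop=1.10, freq_band_hz=0.5):
    """Active power ceiling derived only from local measurements.

    Full output up to v_start, linear curtailment to zero at v_stop, and a
    stop when frequency leaves the band. All thresholds are illustrative.
    """
    if abs(freq_hz - nominal_hz) > freq_band_hz or voltage_pu >= v_stop:
        return 0.0
    if voltage_pu <= v_start:
        return rated_kw
    return rated_kw * (v_stop - voltage_pu) / (v_stop - v_start)


def split_surplus(pv_kw, load_kw, battery_headroom_kw, export_limit_kw):
    """Self-consume first, then charge the battery, then export up to the
    locally measured limit; whatever remains is curtailed."""
    surplus = max(0.0, pv_kw - load_kw)
    to_battery = min(surplus, battery_headroom_kw)
    export = min(surplus - to_battery, export_limit_kw)
    curtailed = surplus - to_battery - export
    return {"to_battery_kw": to_battery, "export_kw": export,
            "curtailed_kw": curtailed}


print(degraded_active_power_limit(voltage_pu=1.07, freq_hz=50.0, rated_kw=10.0))
print(split_surplus(pv_kw=10.0, load_kw=4.0, battery_headroom_kw=3.0,
                    export_limit_kw=2.0))
```

Neither function needs a network connection, which is the property that matters here: the degraded mode depends only on quantities the site can measure itself.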

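Of course, this does not mean that "a site may generate as it pleases once communication is lost."

Consistency with protection coordination, reverse power flow limits, voltage deviation limits, anti-islanding, grid interconnection requirements, and output-control rules is essential.

Even so, if the design for communication loss is confined to a simple full stop, the locality and autonomy that distributed resources inherently have go unused.

The discussion of cybersecurity regulation needs to be raised from whether communication and authentication exist to a discussion of control design: how distributed resources contribute to the grid during a failure, and how they avoid harming it.

Facilities with self-consumption need a design that goes one step deeper.

While the facility can cooperate with the grid to maintain voltage and limit reverse power flow, it continues normal grid-connected operation.

When communication or upstream control is lost, it moves to degraded operation based on local measurement.

If voltage maintenance or reverse power flow suppression is still difficult and there is a risk of adverse effects on the grid, the options to consider include not only stopping all equipment immediately but also disconnecting the grid-connected side and protecting only the customer-side local loads, storage battery, and critical loads.

This does not mean taking anti-islanding or protection coordination lightly.

Rather, it is an advanced Fail Operational design: disconnect in order not to harm the grid, and then secure the customer side's ability to keep operating.

- Operate normally in coordination with the grid.

- On loss of communication or control, run in degraded mode using local measurement.

- If voltage maintenance or reverse power flow suppression is difficult, disconnect the grid-connected side.

- Where possible, maintain only self-consumption, BESS, and critical loads locally.

- Stop if safety cannot be maintained even locally.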
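The five steps above form a ladder of states, and writing the ladder down as a function makes the transition conditions explicit. This is a minimal sketch: the `Mode` names and the four boolean inputs are hypothetical, and `islanding_permitted` stands in for all the electrical and regulatory prerequisites for islanded operation, which this sketch does not check.

```python
from enum import Enum


class Mode(Enum):
    GRID_COORDINATED = "normal operation in coordination with the grid"
    LOCAL_DEGRADED = "degraded operation on local measurement"
    ISLANDED_CRITICAL_LOADS = "grid side disconnected, local loads maintained"
    STOPPED = "stopped"


def select_mode(upstream_ok, can_hold_grid_limits, islanding_permitted, local_safe):
    """Pick the operating mode from four locally evaluated conditions.

    upstream_ok:          communication and upstream control are available
    can_hold_grid_limits: voltage and reverse power flow limits can be kept
    islanding_permitted:  the site is designed and approved to island (assumed
                          to be decided at design time, not by this code)
    local_safe:           local operation itself can be kept safe
    """
    if not local_safe:
        return Mode.STOPPED
    if can_hold_grid_limits:
        return Mode.GRID_COORDINATED if upstream_ok else Mode.LOCAL_DEGRADED
    if islanding_permitted:
        return Mode.ISLANDED_CRITICAL_LOADS
    return Mode.STOPPED


print(select_mode(upstream_ok=False, can_hold_grid_limits=True,
                  islanding_permitted=False, local_safe=True))
```

A site without an approved islanding design simply passes `islanding_permitted=False`, in which case the ladder collapses to normal, degraded, and stopped.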

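This way of thinking connects directly to BESS, microgrids, critical loads, islanded operation, and continued operation during disasters.

However, islanded operation here presupposes compliance with laws and regulations, grid interconnection rules, protective relays, interlocks, grounding, switching procedures, and synchronization conditions at power restoration.

There is no situation in which cybersecurity resilience justifies putting electrical safety requirements second.

What matters is not a binary choice between stopping and continuing, but designing in advance the transition states that reconcile grid-side safety with customer-side continuity.

The fifth principle is to treat closed networks as a means, not an end.

Closed networks, VPNs, local control, and autonomous operation are effective.

But if the word "closed" alone creates a sense of security while shared IDs, excessive privileges, devices that cannot be updated, and networks that allow easy lateral movement are left in place, it is meaningless.

The essence is to localize the scope of impact.

Assume compromise, contain it locally, prevent lateral movement to other sites or to the upstream cloud, and keep the unit of recovery small.

A closed network is one option for achieving that, not the goal itself.

The sixth principle is continuous monitoring through operation.

Beyond static inspection and obtaining certification, observation has to continue during operation.

Changes in traffic volume and destinations, abnormal output behavior, rising API errors, login failures and suspicious administrative operations, certificate expiry, OTA failures, configuration change history, and firmware differences should all be included in the scope of maintenance.

This is the security version of the stance I-S3 has emphasized for solar power, storage batteries, and EV chargers: assuring quality through operation.

Not only generation output and equipment failures but also the state of communication and privileges should be subject to O&M.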
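Several of these items can be checked mechanically during a routine O&M pass. The following is a minimal sketch, assuming a hypothetical per-site `snapshot` dictionary assembled from whatever logs and inventories the site already has; the keys, thresholds, and the `monitor.example.invalid` hostname are illustrative.

```python
import hashlib
from datetime import datetime, timezone


def fingerprint(text: str) -> str:
    """SHA-256 of a configuration export, used to detect unreviewed changes."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def review_snapshot(snapshot, baseline_fingerprint, now,
                    cert_warn_days=30, max_failed_logins=5):
    """Return a list of alerts for one site; an empty list means nothing to flag."""
    alerts = []
    days_left = (snapshot["cert_not_after"] - now).days
    if days_left < cert_warn_days:
        alerts.append(f"certificate expires in {days_left} days")
    if snapshot["failed_logins_24h"] > max_failed_logins:
        alerts.append("login failures above threshold")
    unknown = sorted(set(snapshot["destinations"])
                     - set(snapshot["allowed_destinations"]))
    if unknown:
        alerts.append("unexpected destinations: " + ", ".join(unknown))
    if fingerprint(snapshot["config_text"]) != baseline_fingerprint:
        alerts.append("configuration differs from baseline")
    return alerts


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
baseline = fingerprint("export_limit_kw=30")
snapshot = {
    "cert_not_after": datetime(2025, 1, 11, tzinfo=timezone.utc),
    "failed_logins_24h": 2,
    "destinations": ["monitor.example.invalid", "203.0.113.7"],
    "allowed_destinations": ["monitor.example.invalid"],
    "config_text": "export_limit_kw=30",
}
for alert in review_snapshot(snapshot, baseline, now):
    print(alert)
```

The checks are deliberately plain: the value comes from running them on every site at a regular interval and treating the alerts as ordinary maintenance items.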

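The Danger of Certified, Cloud-Centralized Designs

There is no need to reject the cloud or OTA.

Updates are needed to fix vulnerabilities.

The cloud is effective for wide-area monitoring, demand forecasting, and VPP control.

Watching a large number of facilities by hand alone is not realistic.

The problem arises when convenient centralization becomes the sole pillar of infrastructure that must not stop.

What deserves particular caution here is another shortcut: that a "certified, cloud-centralized" design must be safe.

The more certification is used to organize procurement and operational management, the more devices, IDs, APIs, logs, OTA, and monitoring tend to gather on a single platform.

It is important for that platform to be robust, but centralization itself can create a huge single point of failure.

A single cloud outage, an OTA accident, an authentication platform failure, a DNS failure, API rate limits, leakage of centrally held credentials, or compromise of a shared management console all propagate to the whole at a layer separate from the certification of individual devices.

Caution is also needed toward the tendency to treat always-on cloud connectivity, central authentication, centralized monitoring, and forced OTA as a matter of course in the name of cybersecurity.

Used appropriately they are effective, but they can also become tools that justify vendor lock-in, concentrated failure, and concentrated control.

We should check whether management intended for safety ends up eroding the strengths of distributed energy and creating excessive dependence on a single operator or a single platform.

Forced OTA is attractive from the standpoint of security fixes.

In energy equipment, however, the fact that an update changes control behavior is itself an operational risk.

Signature verification before the update, rollback, staged rollout, confirmation of consistency with site conditions, control over the update time window, and a safe state on update failure all need to be designed.

What matters is not that "it is safe because the manufacturer updates it from the cloud," but that "a failed update does not lead to a dangerous state."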
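The update safeguards listed above can be sketched with the standard library alone. This is a minimal sketch under stated assumptions: a SHA-256 digest comparison stands in for signature verification (a real deployment would verify an asymmetric signature over the image), the `device` dictionary and `health_check` callback are hypothetical, and the rollout fractions are arbitrary examples.

```python
import hashlib
import hmac


def digest_matches(image: bytes, expected_sha256_hex: str) -> bool:
    """Stand-in for signature verification: compare the image digest with a
    digest obtained over a trusted channel. Not a replacement for signing."""
    actual = hashlib.sha256(image).hexdigest()
    return hmac.compare_digest(actual, expected_sha256_hex)


def rollout_waves(site_ids, fractions=(0.05, 0.25, 1.0)):
    """Split sites into successive waves so a bad update hits a few sites first."""
    waves, done = [], 0
    for frac in fractions:
        if done >= len(site_ids):
            break
        upto = min(len(site_ids), max(done + 1, round(len(site_ids) * frac)))
        waves.append(site_ids[done:upto])
        done = upto
    return waves


def apply_update(device, image, expected_sha256_hex, health_check):
    """Install only a verified image, and restore the previous one if the
    post-update health check fails."""
    if not digest_matches(image, expected_sha256_hex):
        return "rejected"
    previous = device["firmware"]
    device["firmware"] = image
    if not health_check(device):
        device["firmware"] = previous  # roll back to the known-good image
        return "rolled_back"
    return "updated"


sites = [f"site-{i:02d}" for i in range(20)]
print([len(wave) for wave in rollout_waves(sites)])
```

In practice each wave would be followed by an observation period before the next one starts, and the health check would include control behavior under the site's own operating conditions, not just whether the device boots.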

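The same applies to dependence on a single authentication platform.

SSO and centralized ID management tidy up operations, but the scope of impact if the authentication platform is compromised must be limited.

Separation by power plant, by facility, and by privilege type, local manual operation in emergencies, audit logs, and privilege revocation procedures are essential.

In energy systems, the balance between convenient centralized management and the authority to stop or run equipment on site as a last resort must not be misjudged.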

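How to Think About Supply Chain Risk

Supply chain risk, including that of overseas manufacturers, cannot be discussed in terms of technical performance alone.

Inverters and communication devices sit at control points of power equipment for a long time.

Geopolitics, the supply chain, legal systems, maintenance access, the country where the cloud is operated, firmware signing, vulnerability disclosure arrangements, and procurement accountability therefore become important.

That said, dividing products into safe and dangerous simply by naming particular manufacturers is also a precarious attitude.

Even with the same manufacturer, risk varies with the configuration.

Is internet-facing remote maintenance allowed, or is the system operated on a closed network?

Can output be controlled via the cloud, or is local approval required?

Who holds administrator privileges?

Can the customer side review logs and configuration change history?

In many cases, these design and operational choices determine effective risk more than the manufacturer's name does.

There are situations in which complete exclusion is not realistic.

Existing installations, price, lead time, spare parts, functional requirements, and installation track record all impose constraints.

That is exactly why procurement should document not only "which manufacturer" but also "in what connection configuration, who can operate what, with which privileges, from where, and until when."

Supply chain risk needs to be handled as a matter of operational design and accountability, not as a political slogan.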

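What to Check in Practice

For future projects, solar power producers and EPCs should not only check the JC-STAR status of the PCS but also make a communication architecture diagram a standard deliverable.

Put the PCS, EMS, communication gateway, LTE router, cloud, maintenance terminals, OCPP server, and API integration partners on a single diagram, and make clear where the authentication boundaries are, where the external connections are, and who holds administrator privileges.

Producing this after construction is too late. It needs to be produced at the design stage.

O&M providers should include the operation of communication and privileges in the scope of maintenance, not only drops in generation and equipment failures.

Password changes, account inventory, certificate expiry, VPN settings, router exposure, firmware versions, unnecessary services, log review, and updates to emergency contacts are maintenance items for a power plant.

Cybersecurity is not solely the job of the information systems department. It becomes part of field O&M.

EMS operators and aggregators should make the scope of their control authority explicit.

To which facilities, under what conditions, and within what output range can they issue commands?

How is the authenticity of a command verified?

Can the site reject erroneous or unauthorized commands?

On communication loss, does the site hold the last command or return to local control?

The more facilities an operator bundles, the more it is expected to design without single points of failure.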
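The questions about command authenticity and on-site rejection can be sketched as a single acceptance check at the site. This is a minimal sketch, assuming a per-site shared key and an HMAC-SHA256 tag over a canonical JSON payload; the field names (`site_id`, `issued_at_s`, `setpoint_kw`) are hypothetical, and a real system might instead rely on mutually authenticated TLS or asymmetric signatures.

```python
import hashlib
import hmac
import json


def sign_command(command: dict, key: bytes) -> str:
    """HMAC-SHA256 tag over a canonical JSON encoding of the command."""
    payload = json.dumps(command, sort_keys=True,
                         separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def accept_command(command, tag, key, now_s, site_id, max_kw, max_age_s=60):
    """Site-side check: authenticity, addressee, freshness, then range.

    Returns (accepted, reason) so that rejections can be logged.
    """
    if not hmac.compare_digest(sign_command(command, key), tag):
        return False, "bad tag"
    if command.get("site_id") != site_id:
        return False, "wrong site"
    if not 0 <= now_s - command.get("issued_at_s", 0) <= max_age_s:
        return False, "stale or future-dated"
    if not 0 <= command.get("setpoint_kw", -1) <= max_kw:
        return False, "setpoint out of range"
    return True, "accepted"


key = b"per-site-secret-for-illustration-only"
cmd = {"site_id": "site-01", "issued_at_s": 1_700_000_000, "setpoint_kw": 25.0}
tag = sign_command(cmd, key)
print(accept_command(cmd, tag, key, now_s=1_700_000_030,
                     site_id="site-01", max_kw=30.0))
```

Using a separate key per site keeps a leaked key from being usable against other sites, and the range check means that even an authentic command cannot push the site beyond limits agreed in advance.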

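Another important question is who holds overall responsibility.

In real installations, the PCS manufacturer, EMS vendor, EPC, O&M provider, cloud operator, LTE carrier, API integration partners, aggregator, and the customer's information systems are all separate parties.

As a result, the product is certified, communication is the carrier's responsibility, the cloud is the SaaS provider's responsibility, site configuration is the installer's responsibility, and continued operation is the power producer's responsibility, and a situation can arise in which no one is looking at how the system as a whole breaks.

The danger is that each component has someone responsible, but no one is responsible for the resilience of the whole.

Contracts and maintenance specifications should therefore do more than state that "JC-STAR conforming products shall be used." They should spell out the division of responsibility.

Decide in advance who authorizes external connections, who holds administrator privileges, who has local operation authority in an emergency, who approves OTA updates, who may view logs, who is the first responder on communication loss, who is the contact when the cloud stops, and who decides to disconnect on API anomalies.

This is not a way of escaping legal responsibility. It is operational design that keeps the site from being left unsure what to do when a failure or attack occurs.

For the installation and operation of EV chargers, residential and commercial use need to be considered separately.

For residential use, it is important to avoid excessively complex cloud dependence and to be able to charge safely on a local basis.

For commercial use and multi-charger sites, the design needs to cover OCPP, billing, authentication, load control, and integration with solar power and storage batteries.

A charger is not just an outlet. It is a control point that moves demand.

What I-S3 emphasizes in the fields of solar power, storage batteries, and EV chargers is likewise a stance of continuing to confirm quality through operation, not just conformity on paper.

In the selection of used panels, module-level visibility using MLPE, remote monitoring, O&M, and field response for small distributed sites, we continuously observe degradation, variation, installation differences, and operating conditions that cannot be seen from inspection at shipment or installation alone.

Security is the same.

Effective security comes within reach only by continuing to watch operational logs, configuration changes, communication paths, accounts, update history, and recovery from anomalies, in addition to the documents from the time of certification.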

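The Resilience Checklist Proposed by I-S3

In the procurement, design, construction, and O&M of distributed energy facilities, the architecture should be checked in addition to confirming JC-STAR status.

For example, can the following questions be answered?

- If the cloud stops, can generation, storage, charging, and demand control continue safely or degrade safely?

- On DNS failure, authentication platform failure, API loss, or certificate expiry, which state does the equipment transition to?

- When the output-control calendar or upstream commands cannot be obtained, has degraded control based on local voltage, frequency, reverse power flow, and load conditions been considered, rather than only uniform zero output?

- When communication is restored, have return conditions, verification, and staged return been designed so that many facilities do not return simultaneously and disturb supply-demand balance or voltage?

- If an OTA update fails, can it be rolled back? Does the equipment avoid entering a dangerous control state?

- Can the person responsible on site operate the equipment locally in an emergency? Is minimum operation possible without cloud authentication?

- For facilities with self-consumption, BESS, or critical loads, has a design been considered that safely disconnects the grid-connected side and maintains only the customer side in a limited way?

- Where islanded operation or continued operation during disasters is planned, are anti-islanding, protection coordination, synchronization at power restoration, switching procedures, and consistency with laws and grid interconnection requirements documented?

- Is inbound communication from outside really necessary? If so, are the source, privileges, logging, and time window restricted?

- Are maintenance privileges separated among the manufacturer, EPC, O&M provider, power producer, and customer? Are any shared maintenance IDs left?

- Is the purpose of using a VPN or closed network clear? Is the inside of the closed network structured to resist lateral movement?

- Under abnormal conditions, is degraded operation such as fixed-output operation, local control, limited charging, or critical-load priority possible, rather than only a full stop?

- Where are the single points of failure? Does the system depend on a single cloud, a single ID, a single API, a single LTE link, or a single maintenance PC?

- Are communication anomalies, output behavior, API anomalies, login anomalies, and update anomalies handled as routine O&M monitoring items?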
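For the checklist item on staged return after communication is restored, one common approach is to give each site its own delay and then ramp output. The following is a minimal sketch, assuming a delay derived deterministically from a hash of a hypothetical site identifier and a linear ramp; the 900-second window and 300-second ramp are arbitrary examples, not values from any interconnection rule.

```python
import hashlib


def reconnect_delay_s(site_id: str, window_s: int = 900) -> int:
    """Spread sites across a window using a hash of the site ID.

    Deterministic per site, so the delay can be audited and needs no
    coordination with a central server at the moment of recovery.
    """
    digest = hashlib.sha256(site_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % window_s


def ramp_limit_kw(seconds_since_reconnect, target_kw, ramp_s=300.0):
    """Linear ramp from zero to the target after the site's delay has passed."""
    if seconds_since_reconnect <= 0:
        return 0.0
    return target_kw * min(1.0, seconds_since_reconnect / ramp_s)


for site in ("site-01", "site-02", "site-03"):
    print(site, reconnect_delay_s(site))
print(ramp_limit_kw(150, target_kw=10.0))
```

Because each site computes its own delay, the staggering still works when the cause of the outage was the central platform itself.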

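This checklist is not only for security specialists.

EPCs should build it into design drawings, O&M providers into inspection items, power producers into procurement conditions, those in charge of self-consumption facilities into the BCP, and EV charging and EMS operators into service specifications.

Treating the check of the certification label and the check of the architecture as separate tasks will be important in practice from here on.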

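Toward Truly Safe Distributed Energy Systems

What distributed energy systems need from here on is not a rejection of security labels.

On the contrary, schemes such as JC-STAR are needed to raise the baseline of quality and make checks at procurement easier.

The problem is treating the label as the end point of security.

A truly safe system uses certified components while assuming that certified components will break, that they will be compromised, that the cloud will stop, that communication will be lost, and that operators will make mistakes.

On that basis, it can keep operating through a communication loss.

It can operate locally when the cloud stops.

Even when the upstream calendar or commands cannot be obtained, it can degrade within a range that does not disturb the grid while watching local voltage, frequency, reverse power flow, and load conditions.

Where necessary, it can disconnect the grid-connected side while satisfying protection coordination and anti-islanding, and support self-consumption, BESS, and critical loads locally.

A partial compromise does not stop the whole.

External dependencies can be minimized.

Closed-network operation can be chosen.

It can fall back to local control.

The configuration can be kept simple.

Problems can be isolated in small local units.

Dynamic monitoring and operational improvement can continue.

These are the capabilities such a system has.

The value of distributed resources lies, fundamentally, in being distributed.

Yet if everything is gathered into one cloud, one ID, one OTA channel, and one management console, the system becomes logically centralized and fragile even though it is physically distributed.

The strength of distributed energy is not simply that equipment is located in many places, but that the capabilities for control, decision-making, recovery, and continued operation are distributed as well.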

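Conclusion: Place Resilience Beyond Certification

Making JC-STAR a requirement, incorporating it into grid interconnection technical requirements, and introducing security requirements for PCS units and EMS are developments the times call for.

As long as solar power, storage batteries, EMS, and EV chargers operate as part of the power system, security cannot be left as an optional, best-effort goal.

Nor can supply chain risk or nation-state threats be taken lightly.

But what energy infrastructure needs is not the reassurance that "it is certified, so it is safe."

What it needs is to accept certification as the minimum line and then design Dynamic Resilience so that the whole does not collapse even when compromised.

Security by Design means designing how the system breaks, not just strong passwords and encryption.

Resilience by Architecture means building a structure that localizes impact and continues necessary operation while degrading, rather than eliminating failures and attacks completely.

What is required of solar power, storage batteries, EMS, and EV chargers from here on is not a complex black box with a security label attached.

It is a system that is explainable, has a small attack surface, is loosely coupled, is locally autonomous, runs at a minimum level even offline, degrades toward the safe side under abnormal conditions, and does not collapse as a whole when one part is breached.

Certification is the entry point.

Getting the entry point right is necessary, but true security is determined by structure, locality, division of responsibility, and the ability to continue operating.

The aim is not to promise perfect defense but to build a structure that does not collapse.

That is real resilience in the era of energy IoT.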

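References

- IPA, "Labeling Scheme based on Japan Cyber-Security Technical Assessment Requirements (JC-STAR)" (セキュリティ要件適合評価及びラベリング制度)

- Agency for Natural Resources and Energy, "Future Actions on Making Cybersecurity Measures for Distributed Energy Resources a Requirement" (分散型電源のサイバーセキュリティ対策の要件化に係る今後の対応について), 19th Grid Code Study Group (グリッドコード検討会), Document 6

- Grid interconnection technical requirements, wheeling service agreement appendices, and grid interconnection consultation request forms of each general transmission and distribution operator

- Public information on unauthorized access to remote monitoring devices for solar power facilities

- Public research and guidelines, from Japan and abroad, on the security of OCPP, EV chargers, DER/VPP, and energy IoT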
